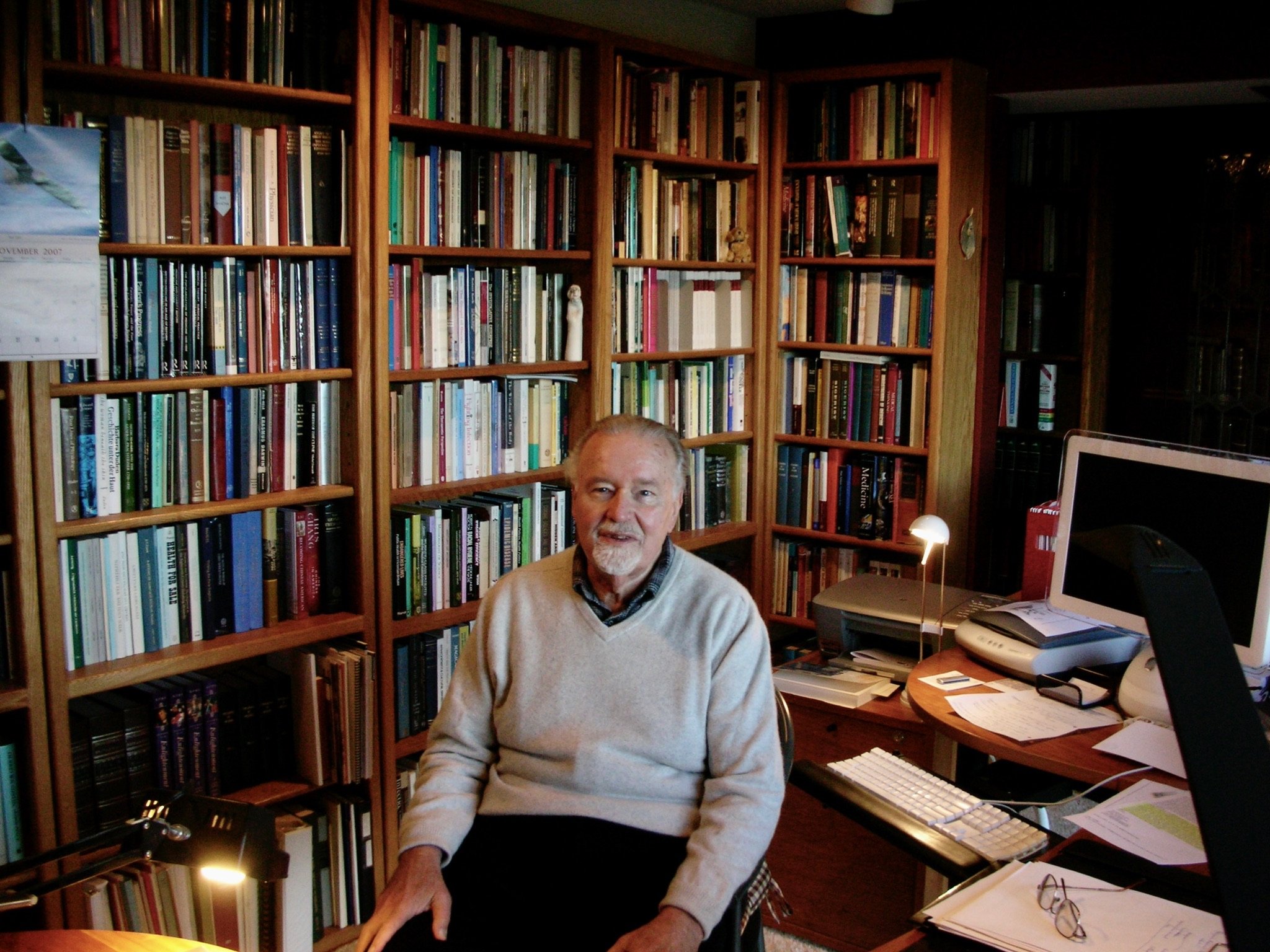

Guenter Bernhard Risse, distinguished historian of medicine and Professor Emeritus, passed away on February 15, 2026, at the age of 93. He died peacefully at his home in Lincoln, California in the midst of his family, after a long struggle with Parkinson’s Disease. (Obituary)

He requested this retrospective of his life be published after his passing in gratitude for all the people who influenced his life and assisted him in his academic career.

IN GRATITUDE: A BRIEF RETROSPECTIVE

Guenter B. Risse

In a recent publication, Aging Thoughtfully: Conversations About Retirement, Romance, Wrinkles, and Regret, authored by University of Chicago faculty members Martha C. Nussbaum and Ernst Freund, the authors discuss and support the traditional penchant for retrospection that infects those of us who are aging and wish to “find wisdom in the wrinkles.” My intent, however, is to express gratitude to a group of people who decisively influenced my professional life. Perhaps looking backward through a notoriously foggy and selective memory and crafting a coherent narrative will help me achieve a measure of self-understanding. Moreover, the story lends meaning and positive feelings to one’s own life at a time of physical decline and social privations in the midst of the coronavirus pandemic afflicting our planet.

While selfish, such an endeavor often turns altruistic and reaches out to the future, hoping that the memories, insights, and even lessons could be of value for those left behind. Leaving a legacy to the next generation is, as Nussbaum and Freund assert, a vehicle for posthumous remembrance as well as understanding and creative stimulation. In this essay, I wish to stress that the popular notion of being self-made, in total control of your destiny, is a myth. In the course of a lifetime, everybody experiences critical turning points and can easily identify as well as credit persons who proved to be consequential, influencing and facilitating life’s trajectory and eventual success.

Parents: F. Bernhard Risse (1902-1988) and Kaete A. Westernhagen Risse (1911-2009)

Both my parents were born in Germany. My father came from Barmen, an early industrial town on the steep slopes of the Wupper Valley in the Rhineland, famous for its textile and metallurgy mills as well as home to the first suspended and electric monorail system in the world, the Schwebebahn. In fact, my paternal grandfather Josef (1854-1948) was a weaver working at a nearby plant under deplorable conditions. The youngest surviving male in a family of ten children (two older brothers had died at the battle of Verdun), my teenaged father barely managed to complete his primary schooling before looking for work to support the extended household. Luckily, he qualified for an apprenticeship at a German import-export firm, Staudt & Co., with multiple subsidiaries in South America, including Argentina, Uruguay, Chile and Paraguay. Officially registered in 1887 by businessman Wilhelm J. Staudt with headquarters in Berlin and Buenos Aires, the company initially offered European textile products in exchange for raw materials such as sheep wool, cotton, and cowhides.

Neutral during the conflict that devastated Europe, Argentina saw its economy rebound after WWI because of an increase in foreign trade. Like other German enterprises, Staudt & Co. now also offered industrial goods such as iron and steel products, notably tools and machinery. As part of his job, my father agreed in 1922 to go overseas and work at the Buenos Aires affiliate. By this time, the resident German community in Buenos Aires was not particularly hospitable to newcomers, but the trading houses that had selected and recruited their employees through the main offices in Germany were quite patronizing and supportive; they even encouraged return visits to the homeland. Indeed, my father was able to make a brief trip to see his parents in 1926. Eventually, half of these employees decided to permanently leave Argentina as conditions and opportunities in Weimar Germany improved.

From several accounts, my father was a model employee. Blessed with an ironclad contract, he was a privileged worker insulated from the anxieties afflicting most German immigrants. With an extraordinary facility for foreign languages, he added Spanish, French, and later English to his international portfolio. Meticulous, self-taught bookkeeping followed. On rare occasions, he would reminisce about periodic sales trips away from Buenos Aires, to the interior of Argentina. Carrying two extremely heavy suitcases full of sample tools, he used trains and buses to make visits to Staudt subsidiaries in Rosario, Santa Fe, and Concordia, Entre Rios as well as faraway German settlements, especially in the provinces of Entre Rios, Cordoba and Santa Fe. To locate potential customers, he was also keen to use local telephone guides and check on potentially Germanic listings.

My mother hailed from Berlin, capital of Germany and one of the most famous European cities. In sharp contrast to my father’s early trajectory, she emigrated in March 1924 with her family at the crest of the German immigration wave to Argentina. Endowed with considerable technical skills (he was an expert locksmith by trade), her father, Paul Westernhagen (1884-1974), had fought during WWI in Germany’s Reichsmarine, barely avoiding several deadly naval battles. Retired from active service, he became a small shopkeeper in Berlin, ruined by inflation in the early 1920s. Like other middle-class Germans facing an extremely bleak future in their homeland, he considered emigration. In florid letters, his older brother, a chemist already living in Buenos Aires, extolled the attractions of his ammonia-producing factory, promising work, partnership, and lodging. After a long and stormy sea voyage, the anxious and seasick Westernhagen family, father, mother and one sister, was allowed to disembark with their belongings after their sponsor finally showed up hours later. They all climbed into a streetcar for a trip to the outer suburbs and open fields, ultimately ending at a primitive hut that could only be reached by a muddy footpath. Living in abject poverty with his girlfriend and a black child, the unmarried uncle apologized for the conditions and toxic fumes, hoping that the by now extremely disappointed newcomers would provide the necessary effort and capital to make improvements.

Suffering greatly for months, my mother’s family managed to extricate itself from this nightmare, returning to a northern Buenos Aires neighborhood in 1925 and renting a property that was converted into a boardinghouse. While my grandfather proved adaptable and turned to the manufacture of safes and metal furniture, the women were busy spending their days cooking, cleaning, and washing. Subsequent moves to another property located further south of the city slightly improved their finances but failed to lift the burdens of full-time menial domestic work. Unfortunately, my teenage mother also never got a chance to go to secondary school; her parents found some occasional private tutors who volunteered to improve her Spanish language skills. As with many other newcomers, the shock of exchanging a bourgeois life in a key European metropolis for the desolation, poverty, and insecurity in sparsely populated suburban enclaves created an unflattering perception of Argentina: it was the Affenland (monkey land), primitive, full of tricks and broken promises. For the rest of her life, my mother would remain a proud German citizen extolling her cultural superiority over the locals and traveling on a German passport. In her vocabulary, the word hiesiger (local) permanently acquired a stigmatizing, negative quality.

Visiting German passenger ships temporarily anchored in the harbor of Buenos Aires was then a popular way for new and lonely immigrants to socialize. Attending afternoon teas enriched with music and dancing allowed young people to exchange glances and share their experiences under the stern supervision of their parents. Quenching their nostalgia for German life, language, and music, they congregated and relaxed after weeks of woes and hard work. My parents first met briefly in 1926 and then again in 1928. Both were quite attractive people; still a teenager and to the chagrin of my grandparents, my mother was often the target of instant marriage proposals given the scarcity of German-born women among the immigrants. She still did not understand much Spanish. After a brief courtship under the scrutiny of chaperons, they became engaged on December 24, 1929; their wedding took place a few months later, on March 8, 1930. In an autobiographical essay written before her death, she wrote with pride that she had finally managed to become an independent German Hausfrau. Indeed, the kitchen remained her absolute domain, albeit expenses were kept under a strict budget. Menus and three regular meals a day were part of her routine. She made her daily rounds through the neighborhood to procure fresh ingredients. Mending clothing and knitting sweaters were meant to save money. Assistance was declined, except for the daily shopping and dishwashing chores.

In 1933, a year after my birth, my father left the Staudt Company, ready to partner with a representative of foreign pharmaceutical firms. Exploiting his linguistic skills, “small pharma” imports from laboratories in France, Germany, and the US became the focus of his new commercial enterprise. His timing was perfect: Juan B. Justo had just been elected President of Argentina a year earlier, inaugurating a period of international trade and prosperity that lifted the country out of the Depression. Dedication and hard work came to provide our family with enough resources to rent a modest apartment in the center of Buenos Aires and join the local middle class. Although retaining their German cultural identity, my parents did not actively participate in the life of its ethnic community. They refused to join clubs and societies. This also allowed them in the 1930s to evade the growing presence and influence of National Socialism in Buenos Aires. An extended business trip in 1938 that also included visits with family members further dampened their lingering nostalgia. Although we were already apolitical, the encounter with life under Hitler left an indelible mark on all of us. Moreover, on Christmas Eve 1938, a new addition to the family, my brother Edgar, would make all previous plans obsolete. After our return we had moved to an apartment house especially selected for its proximity to the German Hospital where my mother expected to deliver.

At the onset of WWII, both my parents clearly realized that the chances of ever returning to live in Germany were over. The commitment to a life-long stay in Argentina crystalized around 1940. Moreover, threats to blacklist my father’s pharmaceutical business prompted him to become an Argentine citizen: Francisco Bernardo. With two children it was time to get out of urban apartment living and move to a suburban dwelling with a garden. We fled Buenos Aires for Florida, a northern town linked by electric train service. After renting for a while, my father purchased a vacant lot and found a contractor to build a new house in 1942. For the next decades, we lived a comfortable middle-class life. Wisdom was dispensed in the form of traditional proverbs. Frugality was key, with spending devoted to essentials. We did not own an automobile; restaurant visits were limited. There was no desire to impress others. Household expenses were kept on a strict, no-frills budget; clothing was mended. Constant encouragement and material resources were directed towards the goal that had eluded my parents in their formative years: more schooling. We were given monthly allowances for books and materials, and discouraged from chasing part-time jobs that could prove distracting. There was considerable pressure to excel and get good grades. Although public education was free in Argentina, private primary schooling and supplemental classes were meant to expand our horizons. When I finally obtained my medical diploma in May 1958, my parents were ecstatic: “we finally have a doctor in the family.”

Health scares: Carlos Neumeier and Ricardo V. Rodriguez, Buenos Aires, 1937-1940

A decision from my parents in 1937 sending me to a private kindergarten backfired six months later when I contracted a serious case of whooping cough that became complicated by bilateral pneumonia. Since I was critically ill in the pre-antibiotic era, a local pediatrician, Carlos Neumeier, came to our home and spent two nights at my bedside with my parents, employing one of the new imported sulfa drugs that saved my life. A long and slow period of convalescence followed, including an extended time at sea in the spring of 1938 during the trip to Germany.

In early 1939, I came down with a sudden and painful abdominal condition and my parents took me to the nearby German Hospital emergency room for evaluation (we still lived at the Arenales Street apartment in the Palermo district of Buenos Aires). After a thorough examination and X rays, the diagnosis was acute appendicitis, and I was officially admitted and readied for surgery. My father was not convinced and requested a second opinion from Ricardo V. Rodriguez, a prominent Buenos Aires surgeon who promptly showed up, checked me out and recommended my immediate discharge, explaining that surgery was not the answer. Risking acute peritonitis and possible death, I went home with my parents and thankfully recovered under his dedicated care.

Fredericus Schule, Vicente Lopez: Karl Schade and Virginia B. Kreutel, 1940-1945

My parents supported the contemporary notion of a dual German-Argentine education. In 1939, I spent my first year of elementary instruction at the prestigious and traditional Germania Schule located in the center of Buenos Aires. Founded in 1903, the school followed the approved six-year curriculum common in all Argentine establishments with additional afternoon sessions for teaching German language and literature. Although I cannot remember many details except a lack of friends and long bus rides in heavy traffic, the institution lost favor with my parents, who considered it elitist, stuffy, as well as expensive and looked forward to a change of school after resettlement in the suburban town of Florida. Our new address on Juan B. Justo Street seemed a good omen: a decade earlier, this former president’s trade policies had been a godsend for my father’s business.

But where to go? Just a mile away, German families living in the adjacent towns of Vicente Lopez and Olivos had already come together to establish their own dual-track institution in 1935. Its name, Fredericus Schule, honored the memory of Frederick II, King of Prussia (1712-1786), a complex and contradictory figure often known as “the Great.” Based in good measure on his military successes and cosmopolitan approach during his rule, Frederick was viewed as a patron of the 18th century Enlightenment, notably art, music and literature, as well as a strong leader and precursor of German unity. Therefore, in the 1930s, adherents of Germany’s new Führer sought to legitimize his rule by drawing favorable comparisons between them.

The school was located on an acre of prime land used for gardening and botanical studies. Some fields were devoted to sports, notably soccer and gymnastics. Volunteering parents cooperated in the organization of year-round festivities, national anniversaries, musical and theatrical performances, craft fairs and graduation ceremonies, transforming the Fredericus Schule into a veritable cultural center under the efficient direction of Karl Schade and Virginia Bruno de Kreutel. Born in Germany, Schade was among the first teachers selected in 1935 to devise and implement a German curriculum for primary education at the school. He also edited a useful handbook of German poetry for class use. Demanding but fair, he was the institution’s Director during the five years, 1940-1945, when I attended, from the second to sixth year of primary education. In his free time, he was an avid mountain climber, eventually participating in a 1947 expedition that made an attempt to reach the top of the Aconcagua, the Americas’ highest peak.

Director Kreutel was in charge of the school’s official Spanish course of studies. She managed to obtain official approval from local and provincial educational authorities. She was also keen to cultivate good relations with the local government in Vicente Lopez. Both displayed superb leadership in a difficult period created by the events of WWII. Tensions and deep divisions arose between ideologically committed Nazi teachers, parents, and students known as the “zackigen” (jagged like the swastika symbol, physically fit and smart) versus the apolitical “runden” (round, plain, flabby, evenhanded). Since we were part of the latter, the schism came to afflict our family’s social relationships, particularly as the war took a decidedly unfavorable turn. Sadly, in March 1945, as the fighting was nearing an end in Europe, the Argentine military government then led by President General Edelmiro G. Farrell and Vice-President Juan D. Peron finally abandoned its neutral position and, under pressure from the United States, declared war on Germany. Moreover, the authorities issued a decree proclaiming all German assets in Argentina to be “enemy property” and thus ordering their seizure. Included in this unprecedented and unconstitutional property grab were not only schools like the Fredericus Schule but banks, business firms, associations, and sport clubs. By November 1945, my class was the last to graduate before the institution was forced to close its doors forever.

Colegio Nacional de Buenos Aires: Osman Moyano and Jose Maria Monner Sans, 1946-1951

Even before my 6th grade graduation, my father had already decided that I should apply to the Colegio Nacional de Buenos Aires, then the oldest and most prominent institution for secondary education in the country, which only became coed in 1959. Originally founded by the Jesuits next to the Church of San Ignacio in colonial times, the Colegio was located within the perimeter of the so-called Manzana de las Luces, Buenos Aires’ Enlightened zone. Later, it became a lay institution administered by the local University and thus the educational center for the creation of what a recent author, Alicia Mendez, has termed a “meritocratic elite” that included presidents and other high government officials, intellectuals, artists, and scientists, as well as diplomats, judges and industrialists. Indeed, CNBA was frequently perceived as a center of Argentine culture, its exceptionality resting on historical traditions. All six years featured the study of Latin. As at all other public institutions, education was free: students were only responsible for the cost of books, supplies, meals, and transportation.

My father’s rationale for insisting that I apply to the CNBA was not influenced by economic considerations or dreams for social advancement. What worried him was the progressive intellectual degradation occurring in Argentina since the military revolution of 1943. An energized nationalism and militarism coupled with religious indoctrination sought to reform public education and create a “new Argentina,” prompting the firing and voluntary departure of thousands of notable scholars and teachers, including several presidents and two Nobel Prize winners. With the quality of instruction and research already declining, the CNBA appeared to be less compromised than the university system it was part of. Many liberal teachers managed to hold their secondary posts. While similarly opposed to the military government, CNBA’s socially heterogeneous teenagers, drawn mostly from urban centers in Argentina, failed to exhibit the same active resistance towards the rules of Peron’s government as the older university students, who were prone to protests and strikes that reached new heights in the riots of October 1945.

Thus, together with nearly 1,500 yearly applicants, I spent several months in the fall of 1945 with a personal tutor in preparation for taking a highly competitive written examination designed to test my knowledge in four main subjects, mathematics, Spanish, history, and geography. The quest was successful: to the joy of my family, especially my father, I received my acceptance letter. About 200 young men were admitted each year and placed in eight divisions, each composed of 25 students. The school functioned in two shifts, one meeting in the morning and the other in the afternoon. I chose the afternoon shift, since I lived in a suburb and faced a full hour of commuting using trains, subways, and doing a fair amount of walking. CNBA was housed in a monumental neoclassical building with white marble staircases, vast courtyards with water fountains, wood-paneled hallways, ornate library, assembly room, and swimming pool, located within the historical center of Buenos Aires.

In February 1946, just before starting my first year at the CNBA, General Juan Peron won the popular vote and was legitimately elected president. After an extended period of labor unrest and crippling strikes, he assumed the office in June. Perhaps reflecting the renewed emphasis on compulsory Christian instruction after the 1943 military revolution that sought to establish a “New Argentina,” the CNBA began listing religion among the ten subjects to be studied during the first and second year courses.

Just before classes ended in November 1946, the Colegio also inaugurated its new director, Osman R. Moyano (1890-1952), a former veterinary physician, ex-student, and teacher at the institution. Humble and austere, Moyano was deeply impressed by CNBA’s mystique; he was in love with its aura of erudition, the solemnity of its auditorium, library, and majestic hallways. He vowed to protect its integrity, the dignity of its professorial and auxiliary staff as well as the highly selected student body. Ironically, Moyano owed this appointment to an old family friend, the surgeon and now despised Peronist politician Oscar Ivanissevich, who had been installed at the helm of the autonomous and more liberal University of Buenos Aires by the new government.

Moyano’s educational philosophy was perceived as politically correct since it made a clear distinction between mere instruction (the transmission of current knowledge) and actual education, a more comprehensive and desirable approach that promised to “shape souls.” The latter mode demanded a closer relationship with students and was designed to also convey moral values to adolescents towards the formation of future informed citizens. For Moyano, the traditional barriers between professorial chair and student bench needed to be lifted to create a more harmonic learning environment.

Among the highlights of the first five years were courses given by Jose Maria Monner Sans (1896-1987) that dealt with aspects of the Spanish language, from style, syntax, and spelling to literary theory, poetry and history. A prominent author and university leader, Monner Sans was a demanding teacher who had published an early monograph about history as a literary genre. Science and data collection were the new goddesses; everything was susceptible to measurement. Admitting that objectivity was an illusion, Monner Sans emphasized history’s subjective nature, since all our narratives of the past were based on collected information driven by conscious or subliminal sympathies and intentions, a position that helped shape my own ideas on the subject.

As my course of studies entered its sixth and final year, political pressures mounted. Graduation in late November 1951 could not come soon enough. To be sure, the Colegio remained a mostly apolitical island surrounded by a sea of faithful Peronistas. Provided with a bust of Peron’s spouse, Evita, for prominent display in the building, Moyano quietly hid the statue out of sight in an obscure corner of the building. In early May, CNBA students protested in front of the building housing the distinguished newspaper La Prensa, which had been taken over by the autocratic government, only to be arrested and dispersed. With a general election approaching, President Peron’s supporters organized numerous rallies in support of his reelection. Since the aging Vice President, Hortensio Quijano, was in failing health, support for the candidacy of First Lady Eva Peron to become the president’s running mate gained momentum. At a rally on Plaza de Mayo on August 22, President Peron was expected to reveal his final choice. Organized by labor unions and the Ministry of Education, the large gathering required the compulsory presence of students and teachers from all major schools. Our CNBA delegation, fronted by Rector Moyano, occupied a prominent place next to the old Cabildo building. After Peron appeared on the balcony of the government building, the Casa Rosada, the current Education Minister, Oscar Ivanissevich, introduced Eva Peron as “Mrs. Vice-President,” prompting loud boos from students of our CNBA delegation. The crowd fell silent, the presidential couple and their immediate retinue directing their angry gaze towards the dissenters, much to the dismay and embarrassment of Moyano. Within a few minutes, members of the mounted presidential guard surrounded our group. Brandishing their sabers, these men on their excited horses simply galloped into the rows of fleeing CNBA students, indiscriminately chasing and hitting everyone with the flat side of their weapons.
Luckily, amid the confusion, I found myself near a subway entry, thus evading the onslaught and rushing home, but some of my classmates were badly hurt. Peron never revealed his choice for the presidential ticket but there were rumors that his wife was ill. Indeed, she died of cancer nearly a year later. Moyano was summoned to the Ministry but retained his job thanks to his close personal ties to Ivanissevich. No student was punished but we came under greater scrutiny by the afternoon prefect, Mr. Amoroso, a short and elderly sympathizer of the current regime who zealously policed the school’s corridors, threatening to compose incriminating reports or recommend suspensions that would prevent our graduation. At a solemn ceremony, I received my bachelor diploma in December 1951. Moyano called it a fiesta de hogar, a home celebration.

Indeed, CNBA had been my intellectual shelter, and Moyano’s quiet leadership (he called it his “apostolic mission”) managed to protect it by paying lip service to the outside forces of Argentina’s fascism. He died a year later. The most critical and formative years of my adolescence were over. Since my subsequent career unfolded in the United States, I never developed that sense of belonging, fellowship, and solidarity characteristic of most CNBA graduates. Like the local University, the Colegio remained an independent and rebellious center for the opposition to Peron’s Argentina. In fact, our struggles were merely a prelude to further bloody clashes with authoritarian military leaders that occurred in the decades ahead.

Medical School: Luis Dellepiane and Juan C. Gonzalez Peña, Buenos Aires, 1952-1953

At the start of my first year in Medical School on April 1, 1952, I signed up to study topographic and functional anatomy in the Department headed by the notable surgeon traumatologist Professor Luis Dellepiane. Because of open enrollment, the large number of students admitted that year forced Dellepiane to create an auxiliary teaching team of honorary “monitors” drawn from selected students who had successfully passed the first partial examination. Selected for such a post, Disector Ayudante Voluntario, I carried out my own dissections and demonstrated my findings to other groups of students, under the supervision and encouragement of my section chief, Professor Juan C. Gonzalez Peña. The latter became the kind of inspirational leader and personal friend any incoming medical student should have. This positive experience allowed me in 1953, during my second year, to remain in the Anatomy Department and continue to function as a member of Professor Dellepiane’s School of Dissectors, dissecting for and teaching theoretical subjects to small groups of incoming students.

Medical School: Bernardo A. Houssay and Miguel R. Covian, Buenos Aires, 1953-1954

During Year 2, the primary field of studies was physiology. The Department was ably represented by Jose B. Odoriz (1908-1971), one of the last collaborators of the 1947 Nobel Prize winner Bernardo A. Houssay (1887-1971), one of the most prominent and influential 20th century Latin American scientists, a key spokesman for modern biomedicine and defender of academic freedom. Houssay’s intellectual prominence and liberal political leanings, however, had brought him into conflict with the military dictatorship that ruled Argentina after 1943. Dismissed from his university posts, he was forced to shift his research to a privately funded laboratory in Buenos Aires, the famous Instituto de Biologia y Medicina Experimental in Palermo, from 1944 until the fall of General Peron’s regime when in 1959 it was affiliated with the University of Buenos Aires.

Odoriz’s inspirational lectures and an acquaintance with another Houssay associate, Miguel R. Covian (1913-1992), sparked my interest in biomedical research. The latter had recently spent two years at Johns Hopkins University studying the physiology of the hypophysis, and upon his return established an experimental laboratory of neurophysiology. With Covian’s encouragement, I started attending weekly sessions at Houssay’s Instituto on Costa Rica Street in Palermo devoted to the presentation of research conducted by other Houssay collaborators and students including Braun Menendez, Rapela, and Brandt.

During Year 3, my preclinical studies shifted to medical semiology under the chairmanship of Professor Roque Izzo, taught at Buenos Aires’ most prominent sanatorium, the Hospital Municipal Enrique Tornu, named for a notable Argentine physician and climatologist, a graduate of the Paris Medical School who specialized in lung diseases. Since 1904 this venerable institution, a vast complex of pavilions, administrative facilities and gardens, had not only collected local sufferers of tuberculosis, notably domestics and day workers, but also housed individuals sent from distant Argentine provinces. Indeed, flooded with immigrants like other modern urban centers around the world, Buenos Aires continued to have its share of infected patients. Since the basement of the pavilion housing provincial cases was reserved for experimental studies, I was encouraged by Gil Mancini, an independent docent, Covian and others to conduct a series of studies using guinea pigs, an animal frequently employed for diagnostic purposes and thus in great supply. Indeed, Covian was already working on the neural basis of thirst and physiology of appetite at Houssay’s Instituto employing Norwegian rats, and had published a preliminary paper on the subject in 1952. He was curious whether his findings could be confirmed employing another animal model. With the assistance of a few other students, we tried to follow existing protocols until it became clear that our experimental animals, the guinea pigs, kept perishing from tuberculosis, a not surprising fate in a perennially contaminated institution like the Hospital Tornu.

Medical School: Juan Jose Beretervide / Hospital Juan A. Fernandez, Buenos Aires, 1957-1958

Year 4 of Medical School was hectic and confusing, spent fulfilling my military obligation at the Argentine Army’s Escuela Profesional General Lemos outside Buenos Aires. After a series of interruptions because of the 1955 military revolts that eventually toppled the sitting president, Juan Peron, in September 1955, I returned the next year to complete the required Year 5 clinical work, covering internal and emergency medicine, obstetrics and gynecology, pediatrics as well as infectious diseases. An influx of migrants from the poorer Argentine provinces to the capital city over the past decade threatened to overwhelm its health care facilities, notably the decaying municipal hospitals erected during an earlier era when shelter and rest were the only offerings for a possible recovery. Housed in flimsy hovels surrounding Buenos Aires, sarcastically referred to as villas miseria (villas of misery), the poor were exposed to a broad spectrum of contagious diseases, notably venereal. Lacking means to obtain care, they flooded the salas de guardia (emergency rooms), a bonanza for so-called perros (dogs), lowly but loyal medical students like myself who staffed such facilities and followed orders including multiple shots of the newly available wonder drug, penicillin.

My last year of medical school, from January to March 1957 and from December 1957 until April 1958, was spent as a volunteer working daily at the municipal Hospital Juan A. Fernandez. Completed in 1943, this modern high-rise building, located in the fashionable northern district of Palermo, housed the Medical Service of Dr. Juan J. Beretervide (1895-1988), then one of the most distinguished Argentine clinicians of his generation. Chief of the Service in Ward 2 since 1928, professor of clinical medicine, and member of the Argentine Academy of Medicine, Beretervide, assisted by Drs. Masoch and Lazzari, was instrumental in guiding my interests towards general internal medicine. In fact, he also graciously agreed to sponsor my medical thesis in September 1960, researched and written during the first year of my residency in internal medicine at Mercy Hospital in Buffalo, New York, during 1959-1960.

Hubert Lando and Angelo S. Deloia, New York, 1958-1960

During my last year of medical school, my father connected with a business partner in New York, Hubert Lando (1886-1968), with the purpose of exploring the possibility of post-graduate medical training in the US. Given the increasingly competitive nature of medical practice in Argentina because of the ever-larger number of post-Peron graduates without an equivalent expansion of population and medical facilities, many young physicians faced a bleak economic future. Under such circumstances, obtaining additional training through the completion of an internship in the US could prove advantageous. On a visit to Buenos Aires in early 1958, Lando related that one of his former neighbors (he lived in the northern New York suburb of Scarborough) was a general practitioner now specializing in radiology on the staff of Mercy Hospital in South Buffalo. Since 1951, this institution had managed to create an affiliation with the Georgetown University Medical School in Washington, DC, that brought prestigious faculty members such as cardiologist Proctor Harvey and nephrologist George Steiner to Buffalo for biweekly conferences and rounds. Yet, in spite of such distant academic connections, private hospitals such as Mercy Hospital encountered difficulties in attracting newly graduating American doctors for their internships and residencies at a time when the American hospital system was experiencing a dramatic expansion. Instead, Mercy Hospital had already come to rely on several foreign medical graduates drawn from predominantly Catholic countries such as the Philippines and Puerto Rico.

Mr. Lando contacted Angelo S. Deloia (1906-1988) at Mercy Hospital to explore the possibility of a one-year rotating internship. The inquiry was successful: a vacancy had occurred. Given the lateness (all house staff positions in America start July 1), I was allowed to begin on October 1, 1958, giving me enough time to obtain a student visa under the Exchange Visitor Program, resign from a postdoctoral fellowship in the Army Medical Hospital in Buenos Aires and travel by ship to New York. When I arrived there in late September, Lando kindly picked me up upon landing, arranged for temporary accommodations in the city, and guided me through the initial cultural shock with special tutorials in American English that would be critical for communicating with patients.

Following a train ride to Buffalo, my first encounter with Dr. Deloia almost never happened: he was at the station looking for a swarthy dark-haired South American instead of a blond young man. Later, however, we managed to bond and his wife, three sons, and grandmother literally adopted me, making me feel part of the family. Even after Dr. Deloia abruptly left Mercy Hospital a few months later because of professional disagreements with his partner at the Department of Radiology, this support never wavered. I often spent my weekends at his Buffalo house with his three sons, Toni, Gregory and Steve. They became my American family and played an important role during my early efforts to assimilate.

John J. O’Brien, Anthony C. Constantine and Joseph Kopp, Buffalo, New York, 1959-1960

As my rotating internship entered its final months, I reached a decision to continue my medical training in the US instead of returning home. In doing so, I was following the advice of John J. O’Brien, an internist with cardiology training who was Mercy Hospital’s Director of Medical Education. While I worked 36-hour shifts and nearly 100 hours weekly, earning room and board as well as a very low stipend, my internship turned out to be more of a secondary service role with lesser educational and practical value. Most attending physicians sought to bypass the house staff, making their unannounced rounds to see their private patients late in the evenings, and phoning their orders directly from their offices to the nursing desk. Only Dr. O’Brien and his deputy insisted on regular morning reports and the biweekly conferences. Under such institutional circumstances, a residency in internal medicine under O’Brien, with rotations in pathological anatomy with Anthony C. Constantine and tutorials with Joseph Kopp, another internist with hematological credentials, seemed attractive. To the disappointment of my parents, who eagerly expected my return, the additional prospects for conducting clinical research on selected Mercy Hospital patients for the purpose of writing my doctoral thesis made my choice easier. By October 1959, I accepted the post of first-year resident in internal medicine.

Meanwhile, thanks to my father’s business contacts in Buenos Aires, I was able to write and send a series of articles in Spanish for publication in a prominent Argentine medical journal, Orientacion Medica. Arranged in the form of travel notes, the essays provided information about the current situation of foreign medical graduates in the US and discussed some topics of interest raised at meetings of the American Federation of Clinical Research in Atlantic City that I attended as part of my medical training.

By early 1960, however, I came to the realization that my goal of seeing a larger number of patients and being allowed to actively participate in their management could not be achieved in a smaller suburban private hospital. Clinical research for completing the MD thesis faced opposition from the attending physicians; promised rotations, except for a stint in the Pathology Department performing autopsies, failed to materialize. Moreover, some of my reports, presented at clinico-pathological conferences, exposed diagnostic errors as well as surgical mismanagement with the potential to trigger malpractice litigation, an uncomfortable situation for me since Mercy Hospital had a small closed medical staff.

John G. Mateer, Detroit, Michigan, 1960-1961

Searching for alternatives, I came across information about training programs at Henry Ford Hospital in Detroit. Originally opened in 1915 under the sponsorship of the Ford family and known for its early clinical trials with penicillin during WWII, this institution had flourished since the early 1950s under the leadership of its Executive Director, Robin C. Buerky, one of the top and more innovative hospital directors in the country. Unbound by the Board of Trustees and Medical Advisory Board, Buerky had managed to recruit a number of prominent specialists to the institution’s closed professional staff. Moreover, he accomplished the construction of a new 17-floor Clinical Building, formally opened in 1955, as well as the expansion of the wings of the existing hospital in 1957. A Division of Medical Education and Research allowed for the growth of house staff positions, including residencies in nineteen specialties and postdoctoral fellowships. With its academic status enhanced, Henry Ford Hospital had become by the late 1950s one of the most prominent group practice hospitals in America, sponsoring yearly Clinical Conferences in affiliation with the Cleveland Clinic in Ohio, the Ochsner Clinic in New Orleans, the Mayo Clinic in Rochester, and the Lahey Clinic in Boston.

A trip to Detroit to investigate the possibility of a residency position in internal medicine at Henry Ford Hospital brought me into contact with John G. Mateer (1890-1966), who despite his age remained the Physician-in-Chief of the institution and therefore responsible for assembling its house staff. A Johns Hopkins medical graduate, Mateer was not only a gastroenterologist of note, but also well-known in automotive circles as a respected physician. A personal friend of Henry Ford, he had been called to his side and pronounced him dead from a stroke in 1947. We discussed the history of HFH, his institutional trajectory since 1920, philosophy of medicine, and commitment to medical care. Mateer seemed intrigued about my background and experiences in Buffalo. We chatted about the importance of so-called “functional” or psychosomatic disease. In the end, he offered me a one-year residency in internal medicine starting July 1, 1960. Excited, I immediately accepted: my path towards quality medical training had taken a decisive leap forward. Later, after I had completed my assignments, Mateer allowed me to spend three additional months at the Hospital, working from July to September 1961 in a newly established Psychosomatic Clinic, for the purpose of effectively completing two years of internal medicine training that seemed adequate for my future professional life outside the United States. Indeed, an expiring exchange visa and anticipated return to practice my craft in Argentina after marriage beckoned. In fact, my bride, Alexandra (Sandy), had been the head nurse of HFH’s psychiatric ward, and I had gotten to know her in November 1960 during a 30-day rotation to assist staff psychiatrists with strictly medical problems.

Daniel J. Bordoli, Martha W. Griffiths, 1962

Following the October wedding and a trip by boat from New York to Buenos Aires, we landed in late November facing another political and economic crisis. There were rumors of an imminent military coup. Before my departure to the US, the current president, Arturo Frondizi, had been elected and assumed power in May 1958. A progressive politician, he had been battling with former Peron labor supporters on the left and conservative forces including the military on the right. Such uncertainties and a veritable glut of new medical graduates looking for employment made it difficult to set up a private practice. In the meantime, without a local license, Sandy tried in vain to land a nursing position in spite of her degree and advanced skills. Contacts with the Ford Motor Company and Kaiser Industries in Argentina were unsuccessful. The option of moving away from Buenos Aires promised few rewards. The provinces were impoverished; all my foreign training and experience would be wasted. Temporarily living with my parents, we also faced an acute shortage of housing due to high demand and frozen rents. The unusually hot summer triggered frequent power outages leading to a dearth of water. My previous mentors, including those at Dr. Beretervide’s Medical Service at the Hospital Fernandez, shared similar frustrations, recommending a return to the US. The only alternative that could potentially be profitable was to move to the oilfields of Comodoro Rivadavia in Patagonia, a lonely outpost far away from cosmopolitan Buenos Aires.

Calling on one of my former friends from Medical School, Daniel J. Bordoli, I managed to obtain a temporary position at the Centro Gallego de Buenos Aires, a prominent local cultural center serving a population with ancestry from the region of Galicia in Spain. My task was to replace vacationing staff physicians for the summer months of January-February 1962. Bordoli was also kind enough to allow me to handle his private practice while he was away, but without prospects for a steady job and income we reached the difficult decision to consider returning to the United States. This determination, reached during our first Christmas together, devastated our families, notably my parents, and cast a pall over all our futures.

The Exchange Visitor Program stipulated that previous visa holders remain two years outside the US before returning for another sojourn, but there was the possibility for a waiver of its requirement. Since I was married to an American citizen, grounds for the waiver could be framed as creating exceptional hardship to that person. Such a document would initially be processed by the Office of Cultural Exchange in the State Department, and if successful, forwarded to the Immigration and Naturalization Service at the Justice Department for further disposition, including extensive background checks. Even assuming success, the entire process involving two separate government bureaucracies promised plenty of red tape and a lengthy wait. After some initial time-consuming confusion, the initial application went to the US Immigration and Naturalization Office in Detroit, my last place of residence before leaving for Argentina.

After the petition was promptly returned to us with demands for additional documentation, the situation turned ugly as well as embarrassing. The State Department had a different, much more optimistic vision of conditions in Argentina, and our struggles were deemed exaggerated. My father volunteered to compose a gut-wrenching affidavit corroborating the prevailing professional and financial crisis afflicting Argentina, as well as expressing his inability to support us for an extended period of time. Other friends and former colleagues wrote similar letters painting pictures of strife, confusion and marginal living standards they would have preferred to ignore, let alone put on paper. Bordoli dramatically summarized his current professional activities, mostly voluntary, confessing that without his relatives’ assistance, he would be forced to look for another line of work. He was also kind enough in early March 1962 to compose a medical affidavit recommending that my now pregnant wife, Sandy, should consider returning to the US in view of potentially serious complications due to a progressive blood type incompatibility that would prompt an early induced delivery by Caesarean section followed by an immediate and complete blood transfusion of her child.

The developing scenario, reinforced by further turmoil in Buenos Aires including President Frondizi’s overthrow late in March by the military leadership, suggested that we needed to plan for a temporary separation, with Sandy returning home to manage the last months of her pregnancy under careful medical observation while I pursued further possible medical work while practicing as a volunteer at Dr. Beretervide’s clinic. My Detroit in-laws, in turn, contacted their friend and Congressional representative, Martha W. Griffiths (1912-2003), to lobby on our behalf at the State Department for the waiver. A member of the powerful Ways and Means Committee, and an ardent advocate for women’s rights, Griffiths arranged a meeting with Secretary of State under President John F. Kennedy, Dean Rusk. The surprising outcome, communicated to us via a telegram, was that the State Department had agreed to issue me a permanent visa. Suddenly, our eventual return to the United States was assured.

Phillip T. Knies, Columbus, Ohio, 1962

Following the decision to return to the US if allowed by the Immigration authorities, I had applied in January to nearly 180 institutions in the US and Canada. A sudden house staff vacancy at Mount Carmel Hospital in Columbus, Ohio prompted Phillip T. Knies (1907-1980), its Medical Director, to look for a third-year resident in internal medicine for the 1962-63 rotation at that institution beginning July 1st. Professor of Medicine at the Ohio State University School of Medicine, Knies was also interested in public health issues, notably prevention of imported diseases; he headed the Epidemiology Division at the federal Quarantine Branch in the capital city. After carefully checking my credentials and previous performance as a house officer both in Buffalo and Detroit, he offered me this position in mid-March. Although his mail was slow to reach Argentina, I managed to send him a telegram on the last day of his deadline, March 31, accepting the position. Obtaining this salaried job was key to our return to the US.

Knies proved to be a kind but also demanding and outspoken medical leader. While focusing on clinical topics, he also enthusiastically supported humanistic perspectives, including my research for a historical paper that was awarded first prize by the Columbus Society of Internal Medicine sponsored by Ohio State University. The competition was limited to house officers from the area’s community hospitals as well as the University Hospital.

Owsei Temkin, Baltimore, November 1962

Since graduation from medical school, I had been interested in pursuing studies in the history of medicine and paleopathology with special emphasis on ancient Egypt. In fact, during my student years, I became the honorary secretary of the Instituto Argentino de Egyptologia under the directorship of Enrique Piñero Jr. with worldwide links to other academic and governmental organizations. Before returning to the USA, I also gave a lecture at the United Arab Republic Embassy on April 25, 1962 on the topic of paleopathology in Ancient Egypt, notably the employment of radiology to investigate its mummies. Given the bleak professional future then awaiting most foreign medical graduates in the American Midwest, I conceived the somewhat controversial idea of returning to the classroom and combining my medical credentials with the study of history. Indeed, to eschew a potentially lucrative medical career for another lengthy course of studies and a less well remunerated academic career was considered a folly, even the butt of numerous jokes. To further explore such plans, I arranged a visit to Baltimore for the purpose of interviewing with Owsei Temkin, then the Director of the Institute for the History of Medicine at Johns Hopkins University School of Medicine. At that time, this unit offered a number of NIH fellowships for physicians who wished to obtain PhD degrees in history. A gracious host, Dr. Temkin, who specialized in Ancient Greek medicine, discussed my plans, but given my interests in ancient Egypt, ended up suggesting that the University of Chicago would be a better venue for my studies, notably the Oriental Institute. I was advised to contact Ilza Veith, a former Hopkins graduate and now professor at the Medical School. After a visit to Chicago in February 1963, an agreement was reached among Professor John A. Wilson from the Oriental Institute, Professor F. Eggan from the Anthropology Department and Veith to cosponsor an interdivisional plan of studies leading to a Ph.D. degree in Anthropology. To support it, Professor Veith promised to request a postdoctoral fellowship.

Walter Palmer and Henrietta Herbolsheimer, Chicago, Illinois, 1963

Unfortunately, as my plans for a radical career change from practicing internist to graduate student matured, Professor Veith announced her departure for the University of California, San Francisco, a move that cancelled the expected fellowship and put my academic future in limbo. However, Knies’ friendship with Walter Palmer (1896-1993), a famous American gastroenterologist and Richard T. Crane Professor Emeritus at the University of Chicago Medical School, allowed me to obtain a temporary medical license to practice medicine in the state of Illinois and led Palmer to recommend me for a full-time paid position at the University Health Service at that institution, headed by Henrietta Herbolsheimer (1913-1999). This post also allowed me to receive an Assistant Professor appointment at the Medical School, making me both a faculty member and a graduate student in the same institution.

An early advocate for women’s health and epidemiology as well as an associate professor of medicine in the Medical School, Herbolsheimer offered support and help in scheduling student appointments between my classes that became crucial. In addition to some tuition waivers, this staff appointment allowed me to finance my studies towards a Ph.D. degree from the History Department starting in the fall of 1963. However, as a humanist, I shared in a September 16, 1963 letter to Dr. Knies my unease about the growing emphasis on basic biological science in patient care: “the cooler winds of science are overcoming the warmer human understanding and reassurance,” a trend I wished to highlight in my future historical work.

John A. Wilson, Lester King, and William H. McNeill, Chicago, 1963-1969.

I started my academic studies at the University of Chicago in the fall quarter of 1963 at the Oriental Institute part time by taking a course in the archeology of ancient Egypt under the supervision of professor John A. Wilson (1899-1976), a prominent Egyptologist and successor of James H. Breasted. Subsequent course work on ancient Near East culture, hieroglyphics, and Middle Egyptian texts followed. By early 1966, the issue of a topic for my MA dissertation arose. Since all extant medical texts had already been translated, what could be considered original work? A plan to participate in an excavation project at Saqqara in Egypt being conducted by the prominent Egyptologist Walter B. Emery near the suspected tomb of Imhotep, the ancient god of healing, appeared promising. Since Wilson traveled each winter to Egypt as part of the University of Chicago’s bibliographic work at Luxor, a personal meeting with Emery was arranged in London. Unfortunately, the project’s sponsor, the Egypt Exploration Fund, frustrated by the lack of significant findings and the high costs of removing a vast network of tunnels filled with embalmed Ibis birds, was cancelling the Imhotep dig. Since the Fund retained its concession in that zone, there was little hope that further explorations would occur in the foreseeable future. Moreover, UNESCO’s urgent call to save Nubian monuments from the impending flooding caused by the new Aswan dam, shifted resources and explorers to new projects. With Wilson’s advice--he chaired the UNESCO Consultative Committee for the Salvage of Nubian Monuments--I decided to switch departments, joining the Humanities Division and History Department where I was awarded an MA Degree in December 1966 in both the History of Ancient Near East and the History of Science. My career as an Egyptologist was over, but my lifelong interest in Ancient Egypt’s health and medicine spurred by professor Wilson persists.

A Lecturer in the History of Medicine at the University of Chicago, Lester S. King (1908-2002), a pathologist and Harvard graduate, had a full-time day job: he was a Senior Editor of the Journal of the American Medical Association with headquarters in downtown Chicago. Versed in the philosophy and history of medicine, his interests centered primarily on the evolution of medical thought with particular reference to modern times. King frequently lectured on the “heritage of problems.” He stressed that while answers changed, problems and questions remained the same. Since he was working on a book which surveyed eighteenth-century topics, I shifted my research and found the topic for my doctoral dissertation: the transmission and influence of medical theory from Scotland to Germany. A notable bonus of being a Lester King student was the fact that he was a champion of clear and concise scientific writing, mincing no words and heaping criticism on authors who employed jargon and tortured prose. At once, my Germanic style and employment of the passive voice became his favorite targets, transforming my drafts of term papers into puddles of red ink. While feared, periodic pilgrimages to his Chicago office for frank discussion and feedback became valuable lessons for my transformation from a medical practitioner to academic and author.

Chairing the History Department at Chicago during part of my student years, famous world historian William McNeill (1917-2016) had won the National Book Award in History and Biography in 1964 for his Rise of the West.

In early 1967, McNeill encouraged me to seek outside financial support from the Josiah Macy, Jr. Foundation in New York headed by John Z. Bowers. The application was successful in March of that year, allowing me to receive a Fellowship in History of Medicine and the Biological Sciences renewable for three years that enabled me to end my full-time job in the UC Student Health Service and accelerate my remaining PhD requirements on a full-time basis. In the late 1960s, McNeill was working on a new book that sought to place past human disease outbreaks within an ecological framework. Since he knew that I had medical training, we frequently engaged in conversations concerning the spread of major pandemics. He was particularly amazed about the influence of smallpox in the conquest of Mexico and other transcontinental diffusions of contagion that altered the course of history. His book, Plagues and Peoples, was published in 1976 and became popular although some mainstream medical historians dismissed it as a work of fiction. With their strong endorsements, both Dr. King and Professor McNeill were also instrumental in persuading the University of Minnesota to offer me a position after earlier efforts to obtain a curatorship at the Smithsonian Institution failed. This development followed my presentation of a paper at the History of Science annual meeting in Dallas, Texas, December 27-30, 1968, attended by Professor Leonard Wilson, the head of a new Department of Medical History at that institution.

Owen H. Wangensteen, University of Minnesota School of Medicine, 1969-1971.

When I accepted an offer to assume the position of Assistant Professor in December 1969, Owen H. Wangensteen (1898-1981), the world-famous Minnesota surgeon and recently retired chief of surgery, together with his wife Sally, a medical editor and historian, immediately offered support and friendship. Wangensteen had attracted a large number of students, including several heart transplant surgeons. After his retirement in 1967, he had been instrumental in adding a floor to the Health Sciences Library to house an impressive collection of rare medical books and in starting a program of medical history with a graduate program for physicians. The Wangensteen Historical Library of Biology and Medicine officially opened in 1972 and his book on the history of surgery was published in 1978.

When I was notified in September 1970 that my appointment in the new Department of the History of Medicine at the Medical School would not be renewed after June 30, 1971 since my salary came from “soft” money, Dr. Wangensteen immediately came to my assistance. Given the current economic conditions prevailing in most medical schools, I decided to return to private medical practice in Minneapolis by February 1971. Yet, Dr. Wangensteen contacted several university leaders and educational foundations around the country, shopping my academic record to keep me on an academic track. A month later we succeeded with his help in obtaining a tenured position at the University of Wisconsin. He was a pillar of support to my family and me during two difficult years of financial insecurity.

Glenn C. Faith and Peter L. Eichman, Madison, Wisconsin, 1971.

Dr. Faith was a faculty member in the University of Wisconsin Pathology Department and member of the Search Committee headed by the anatomist Otto A. Mortenson in March 1971 to fill the chair in medical history vacated by the resignation of visiting professor Nicolas Mani. Mani had resigned in October 1970 to assume the directorship of the Institute of the History of Medicine at the University of Bonn in Germany. After my invited lecture in Madison on April 8, Dr. Faith was quite enthusiastic about my candidacy; I believe his efforts on my behalf were decisive in my selection.

Just before the end of his administrative term, Dr. Eichman, Dean of the Medical School since 1965 and an internist and neurologist, became involved in the recruitment process. In view of the fact that all my predecessors, including the first professor, Erwin Ackerknecht, who had arrived in 1946, had returned to Germany, Eichman asked me the rhetorical question: “Do you have any ambitions towards a chair of medical history in Europe?” Getting a negative response, he agreed on May 12 to generously offer me a tenured position as Associate Professor in spite of the fact that I had only received my Ph.D. in History from the University of Chicago a few months earlier. His enthusiasm and commitment following our frank exchange of ideas about the future of medical history in Madison laid the foundations for reactivating this departmental unit, developing new elective courses for medical students and eventually offering a limited number of fellowships for graduate studies. The unit continues to flourish in the 21st century.

Ralph H. Kellogg and Rudi Schmid, San Francisco, 1984-2000.

A University of California, San Francisco Professor of Physiology and an avid student of history among his broad spectrum of interests, Ralph H. Kellogg (1920-2009) had been appointed in 1984 to be a member of the Search Committee for a new chair for the Department of the History and Philosophy of Health Sciences at his institution. In that capacity, he repeatedly encouraged me to apply for the position. After my appointment and our arrival in July 1985, he generously guided our first steps in the enchanting city of San Francisco. When we managed to purchase a home across the street from his house, Kellogg also became a friendly neighbor, hosting occasional dinners and meetings. He also offered sage advice concerning administrative matters and supported critical departmental rebuilding efforts.

Dean of UCSF’s Medical School since 1983, Rudi Schmid (1922-2007) was an early supporter of my candidacy for Professor and Chair of the Department of the History of Medicine. A world-famous expert on liver diseases, the Swiss-born researcher and teacher was eager to put his personal stamp on the UCSF faculty, appointing more than a dozen departmental chairs during his highly successful administrative tenure that unfortunately ended in 1989. Our personal relationship flourished because of his unique interdisciplinary approach and internationalism. We bonded further because of our experiences at the University of Chicago, where he was a professor during my years on that campus pursuing a Ph.D. degree; he had also been at the University of Minnesota Medical School and knew Owen Wangensteen. From the very start, Dr. Schmid and his Executive Assistant Dean Valli McDougle became strong supporters of the Department and soon our friendship extended beyond the purely administrative formalities. Indeed, my wife and I had the pleasure of attending a number of Rudi Schmid’s evening soirees at his beautiful home and garden at Kentfield in Marin County.

Family

Last but not least, I would like to acknowledge the critical roles played by my loving wife Alexandra (Sandy) as well as my three children. Without their support, most of the above steps in my career would not have been possible. From the very start, Sandy became a faithful companion and advisor. After our wedding in Detroit in October 1961, she strongly supported my earlier plan to move and live in Argentina that proved frustrating and futile. Our stay in Buenos Aires sadly ended a few months later with a return to the US. Her citizenship and problematic pregnancy determined my fate, giving me the chance of a permanent visa and possible medical career in America. A year later, Sandy bravely signed on to my unexpected quest for an academic career, an uncommon shift with significant social and economic implications for our future. More importantly, while I resumed the role of graduate student, this change created a growing burden of domestic and childrearing responsibilities for her. Juggling the needs of a growing family, the initial years in suburban Chicago were quite difficult, but with Sandy’s steady encouragement and hard work we succeeded. Purpose and common sense prevailed. Because of dwindling academic positions in my field of expertise, the ensuing moves, first to Minnesota, then Wisconsin, and ultimately San Francisco all required flexibility, understanding, and above all careful planning.

During an especially stressful time in Minnesota in 1981, when it appeared that my position of assistant professor financed by an extramural grant would no longer be funded, Sandy immediately started preparations for returning to her previous nursing career, ready to contribute financially. Later, when I obtained an appointment in Wisconsin and moved to Madison, she was left alone not only to manage our Minneapolis household but also to organize and execute the sale and subsequent interstate move.

In the 14 years in Madison and later the 15 years in San Francisco, while I was the senior professor and departmental chair, Sandy managed to organize and host a colossal number of social functions, receptions, and dinners with graduate students, faculty members, and visiting scholars, in addition to her weaving and volunteering at the California Marine Mammal Center and State Parks. Finally, to celebrate my retirement and 70th birthday, she was instrumental in organizing a special luncheon at the American Association for the History of Medicine meeting in Kansas City, MO to give colleagues and friends a chance for a typical roast.

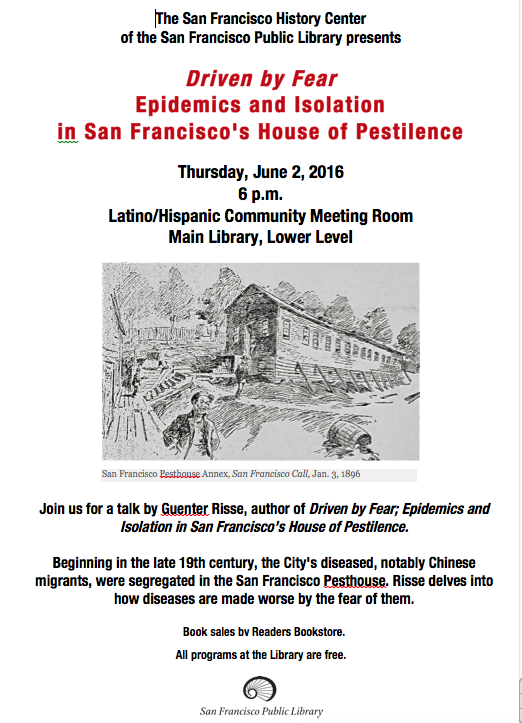

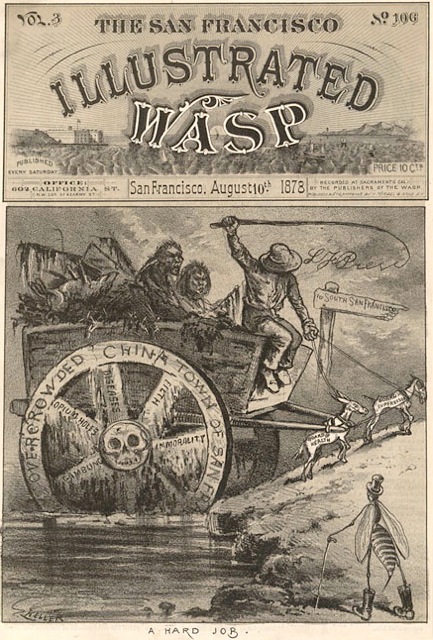

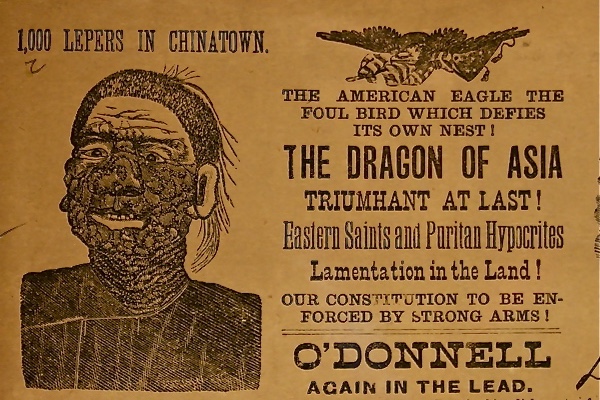

Finally, I would like to acknowledge the contributions of my three daughters Heidi, Monica and Lisa. Change can often be difficult and frustrating. Searching for adequate housing, making new friends, and finding adequate schools for our children took courage, patience, good will and time. Their acceptance of the multiple moves through the Midwest demanded by my academic career, often at key points of their schooling and socializing, was admirable. As they grew older, their help included chores related to my scholarly writing. I will never forget the Christmas holiday season of 1998 when the entire family created a general index for my 700-page book on hospital history after the graduate student hired for the task unexpectedly quit. Sitting around the tree and wrapped presents, my girls shouted and shuffled their cards to meet the publisher’s final deadline. Much was at stake: after nearly ten years of research and writing, the book needed to materialize before the next millennium. Editorial help was always forthcoming, especially from Lisa, who read my last two books on Chinatown. Monica worked with illustrations and created PowerPoint presentations. Heidi created and still maintains my private website.

Under the rubric of family, I also wish to point out the important role played by neighbors, personal friends, colleagues, and students in each of the venues where I had the privilege to interact with them. Along the way they vastly enriched our lives. An apt and humorous summary of my odyssey can be found in the lyrics of a song composed in Madison, Wisconsin on the occasion of my academic move to San Francisco in 1985.

LOST TO SAN FRANCISCO: AN ACADEMIC LAMENT

(Song to the tune of My Darling Clementine)

Chorus:

Oh my Guenter

Oh my Guenter

Gone to Frisco far away

You are lost but not forgotten

Gone to Frisco far away

From the Pampas

Came to Buffalo

Where the snow fell every day

To Chicago

Studied mummies

But medical history held sway

(Chorus)

Then to Minnie

Minnie-apple

Where Lord Leonard had his way

With his ste-tos-

scope he learned

What your plans were every day

(Chorus)

From Minneapolis

You came to Madison

Athens of the Middle West

Brought in Ronald

And then Judy

Made medical history the very best

(Chorus)

TV Lenny

And his warehouse

Kept you warm and entertained

But the call from

San Francisco

Was too loud to be detained

(Chorus)

AIDS and earthquakes

Notwithstanding

You leave our Athens

For the West

You are lost but not forgotten

And we wish you all the best!

(Chorus)